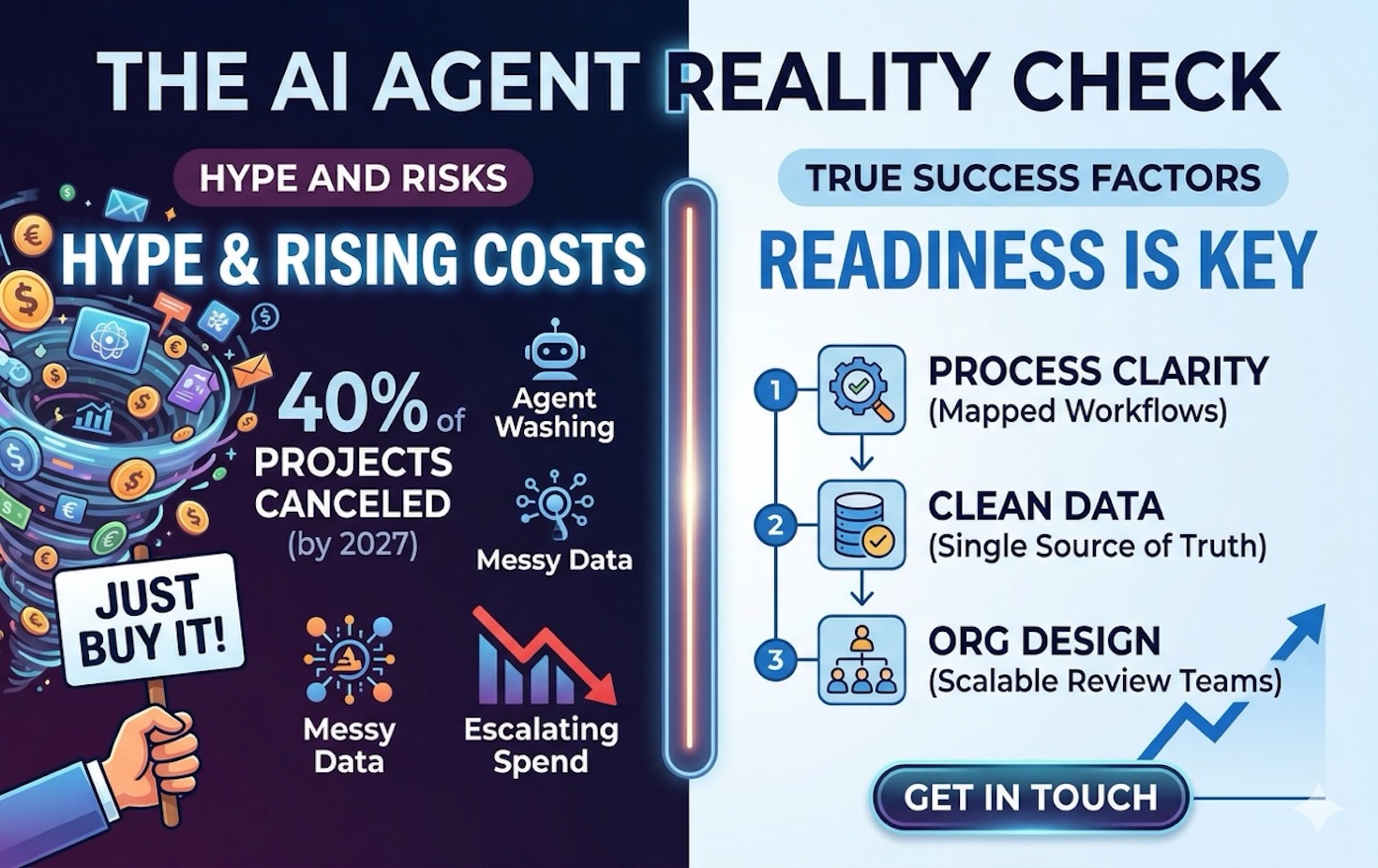

Everyone is selling AI agents right now. Anthropic is testing managed 24/7 agents for businesses. Google just shipped Jules V2 for autonomous coding tasks. Cursor launched a persistent agent window. And Gartner predicts that over 40% of agentic AI projects will be canceled by the end of 2027 — not because the technology failed, but because of escalating costs, unclear business value, and inadequate risk controls.

That second part tends to get buried in the hype. The agents are getting remarkably capable. The organizations deploying them, for the most part, are not keeping up.

The Gap Between “Demo” and “Deployed”

The most clarifying data point in the current AI agent landscape comes from Scale AI’s Remote Labor Index, which tested frontier AI agents on 240 real freelance projects from Upwork — video production, data analysis, architecture work. The best-performing agent completed just 2.5% of projects to a standard a paying client would accept.

Yet on structured benchmarks where all context is provided, those same models approach expert-level performance. The gap isn’t capability; it’s context. Benchmark tasks arrive with the instructions and context already supplied. Real work requires the agent to figure out what matters, what’s missing, and what “good” looks like in a specific business environment.

For SMBs considering AI agents, this distinction matters more than any feature comparison. The question isn’t “which agent should we buy?” It’s “can our business provide the context an agent needs to succeed?”

Why “Agent Washing” Should Worry You

Gartner estimates that only about 130 of thousands of agentic AI vendors offer genuine agentic capabilities. The rest are engaged in what analysts call “agent washing” — rebranding existing chatbots, RPA tools, and AI assistants as “agents” without substantial autonomous capabilities.

This matters because the term “AI agent” has become a Rorschach test. A vendor’s “agent” might mean anything from a chatbot with better prompting to a system that autonomously executes multi-step business workflows. When a 25-person firm evaluates these tools without understanding the distinction, they’re likely to pay for an agent and receive an assistant — or worse, deploy something that takes autonomous actions without the infrastructure to keep it safe.

The practical filter: can the tool take a multi-step action across your actual systems without human intervention at each step? If not, it’s automation or assistance — useful, but not what the current hype is promising.

Three Things That Matter More Than the Agent

The pattern we see across agent deployments — from enterprise pilots to SMB experiments — is consistent. The organizations that succeed share three prerequisites that have nothing to do with which agent they chose.

1. Process clarity before automation. A Deloitte analysis published in MIT Technology Review put it sharply: “The real risk isn’t that AI won’t work — it’s that competitors will redesign their operating models while you’re still piloting agents.” The firms that get value from agents have mapped their workflows end to end — not the idealized version, but the real one with workarounds, tribal knowledge, and undocumented exception handling.

Consider a 30-person financial advisory firm that deploys an agent to handle client onboarding. If the onboarding process depends on three people checking different spreadsheets and one person who “just knows” which documents to request for certain account types, the agent will automate the official workflow and miss everything that actually makes onboarding work. The fix isn’t a better agent — it’s mapping the real process first.

2. Data that’s clean enough for machines. Humans compensate for messy data constantly — we infer what “Delta” means from context, reconcile duplicate records instinctively, and work around missing fields. Agents can’t. When an agent takes action without a human checking the output, it needs data that is close to perfect because it cannot reliably notice when data is ambiguous or wrong the way a person can.

For SMBs, this means your CRM, your project management tools, and your document repositories need to be clean before an agent touches them, not after. The firms that skip this step consistently discover in month two that their agent has been making confident decisions based on garbage data.
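One way to make "clean enough" concrete is a quick audit of an export before any agent reads it. The following is a minimal sketch, assuming a CRM export loaded as a list of dictionaries; the field names in `REQUIRED_FIELDS`, the `audit_records` helper, and the sample rows are all hypothetical and only illustrate the idea.

```python
from collections import Counter

# Hypothetical required fields; adjust to whatever your own export contains.
REQUIRED_FIELDS = ("name", "email", "account_type")


def audit_records(records):
    """Count blank required fields and list emails that appear more than once."""
    missing = Counter()
    emails = Counter()
    for record in records:
        for field in REQUIRED_FIELDS:
            value = record.get(field)
            if value is None or not str(value).strip():
                missing[field] += 1
        # Normalize before comparing so "AP@x.com " and "ap@x.com" match.
        email = str(record.get("email") or "").strip().lower()
        if email:
            emails[email] += 1
    duplicates = sorted(e for e, n in emails.items() if n > 1)
    return {"missing": dict(missing), "duplicate_emails": duplicates}


# Illustrative sample data, not real records.
sample = [
    {"name": "Delta Partners", "email": "ap@delta.example", "account_type": "corporate"},
    {"name": "Delta Partners LLC", "email": "AP@delta.example ", "account_type": ""},
    {"name": "", "email": None, "account_type": "individual"},
]

report = audit_records(sample)
print(report)
```

A person skimming these three rows would spot the duplicate and the blank fields immediately. The point of the audit is to surface the same problems in a form you can fix before an agent acts on them.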

3. Organizational design for agent-speed throughput. If an agent 10x’s your content production, who reviews 10x the output? If it triages 50 customer tickets an hour instead of 5, who handles the escalations? Techaisle’s 2026 SMB predictions identify this as the emerging gap: by 2026, the challenge for SMBs is not acquiring agents but managing a workforce of them. Agent orchestration and governance together represent a new technology gap that most small businesses haven’t even started thinking about.
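The throughput question is simple arithmetic, and it is worth running before a pilot. Here is a rough sketch using the 5 versus 50 tickets per hour from the example above. The 20% escalation rate, the 8-hour day, and the capacity of 10 human reviews per day are assumptions chosen for illustration, and `daily_review_backlog` is a hypothetical helper.

```python
def daily_review_backlog(items_per_hour, escalation_pct, reviews_per_day, hours_per_day=8):
    """Escalations left unreviewed at the end of one day (never negative)."""
    escalated = items_per_hour * hours_per_day * escalation_pct / 100
    return max(0.0, escalated - reviews_per_day)


# Assumed numbers: 20% of tickets escalate, one person can review 10 per day.
before = daily_review_backlog(items_per_hour=5, escalation_pct=20, reviews_per_day=10)
after = daily_review_backlog(items_per_hour=50, escalation_pct=20, reviews_per_day=10)

print(before, after)  # prints "0.0 70.0"
```

Under these assumptions, a reviewer who had spare capacity at 5 tickets an hour falls 70 escalations behind every day at 50. Swap in your own rates to see where your review step breaks.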

What to Do This Week

Before evaluating any AI agent vendor, spend one hour on this: pick your most repetitive business process and document every step — including the parts that live in someone’s head. Note where data gets re-entered, where decisions depend on context that isn’t written down, and where one person’s judgment is the bottleneck.

That map will tell you whether you’re ready for an agent or whether you’d be automating chaos. And if the map reveals that the process is already clean, well-documented, and data-rich, you may have found the first place where an AI agent can genuinely help.

Common Questions About AI Agents for Small Businesses

How much do AI agents cost for a small business?

Agent costs vary enormously depending on what you’re automating. Off-the-shelf agent platforms range from $50 to $500 per month. Custom agent deployments for specific business workflows typically run $15K to $40K for initial setup, plus ongoing compute costs. The bigger cost risk is deploying an agent before your processes and data are ready: a failed agent project typically costs far more in wasted engineering time and organizational disruption than the technology itself.

What’s the difference between an AI agent and an AI assistant?

An AI assistant responds to requests — you ask, it answers. An AI agent takes autonomous, multi-step action across your systems: it reads a ticket, decides the priority, pulls customer history, drafts a response, and routes the escalation. The distinction matters because agents need much stronger infrastructure — clean data, clear permissions, and human oversight processes — to operate safely.

Should my business wait for AI agents to mature before investing?

Not necessarily — but your first investment should be in readiness, not technology. Map your processes, clean your data, and design your review workflows. These steps make your business more efficient regardless of AI, and they position you to deploy agents effectively when the timing is right. The AI consulting services landscape is evolving quickly, and being ready to move matters more than moving first.

What are the biggest risks of deploying AI agents too early?

The most common failure mode is automating a broken process. The agent executes confidently and quickly — but it’s executing the wrong thing, on bad data, without anyone checking the output. The result is usually discovered weeks later when a customer complains or a report doesn’t add up. The second risk is scope creep: giving an agent broad permissions without deliberate constraints, which is the organizational equivalent of handing a new employee the keys to every system on their first day.
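Deliberate constraints can be as plain as an explicit list of what the agent may do, with everything else denied by default. The sketch below assumes a hypothetical `AgentPolicy` class and made-up action names. It does not reflect any vendor's actual permission model.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class AgentPolicy:
    """Explicit constraints for one agent; action names are illustrative."""
    allowed: frozenset                       # actions the agent may take alone
    needs_approval: frozenset = frozenset()  # actions a human must sign off on

    def decide(self, action: str) -> str:
        if action in self.needs_approval:
            return "escalate"
        if action in self.allowed:
            return "allow"
        return "deny"  # default-deny: anything not listed is refused


# Hypothetical support agent with a narrow, deliberate scope.
support_agent = AgentPolicy(
    allowed=frozenset({"read_ticket", "draft_reply", "set_priority"}),
    needs_approval=frozenset({"issue_refund"}),
)

decisions = {
    action: support_agent.decide(action)
    for action in ("draft_reply", "issue_refund", "delete_customer")
}
print(decisions)
```

The design choice that matters is the final `return "deny"`. A new action is unavailable until someone decides to grant it, which is the opposite of handing over the keys on day one.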

Getting AI agents right matters more than getting them first. If you’re evaluating your options or want a second opinion on whether your business is ready, we’re happy to talk — reach out.
